Whilst the default assumption may be that if you and I type the same query into Google we will get the same results, that is no longer true (if indeed it ever was...). This does of course have consequences for advice givers who say: "just Google it - it'll be the top result..."

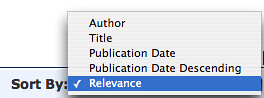

In the Library world, however, I think there is an element of search result Ground Truth, particularly in the OPAC. Given the same search terms, I suspect that the results are returned in the same order pretty much every time.

(The same is not true for federated "one search" systems over distributed databases. My experience of many of these systems is that they return results in the order they are received, with no post-ranking, which means that often the lowest quality results, returned from the smallest (though potentially most responsive) databases, are displayed first. And as everyone anecdotally knows, if it's too far below the fold, most people won't ever see it... that said, infinite scroll can help here, I think?)
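To make the "no post-ranking" problem concrete, here's a minimal sketch in Python. Everything in it is an assumption made for illustration: the `Hit` record, the per-source relevance scores, the source weights and the database names are all hypothetical, and no real federated search product is being described.

```python
from dataclasses import dataclass


@dataclass
class Hit:
    title: str
    source: str
    score: float  # relevance reported by the source (assumed to be 0..1)


def merge_in_arrival_order(batches):
    """Concatenate result batches in the order the sources responded."""
    return [hit for batch in batches for hit in batch]


def merge_with_post_ranking(batches, source_weight):
    """Pool every batch, then sort by source-weighted score, highest first."""
    pooled = merge_in_arrival_order(batches)
    return sorted(
        pooled,
        key=lambda h: h.score * source_weight.get(h.source, 1.0),
        reverse=True,
    )


# Hypothetical data: the small database answers first, so its hits
# lead the arrival-order list regardless of quality.
batches = [
    [Hit("Local pamphlet", "small_db", 0.9),
     Hit("Parish newsletter", "small_db", 0.8)],
    [Hit("Standard textbook", "big_db", 0.7),
     Hit("Review article", "big_db", 0.6)],
]
weights = {"small_db": 0.5, "big_db": 1.0}  # assumed trust in each source

arrival = merge_in_arrival_order(batches)
ranked = merge_with_post_ranking(batches, weights)
print([h.title for h in arrival])
print([h.title for h in ranked])
```

The catch, of course, is that post-ranking means waiting for the slow databases before showing anything, which may be exactly why some systems don't bother.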

So does it make sense to return the same results to every user of a Library catalogue? Or does it make sense to allow results to be ordered "by relevance", where relevance is determined according to the current state of the user and/or the library?

Does anyone know what ranking factors go into the Voyager "relevance" calculation by any chance? So here are a few things that I think might be interesting in terms of possible ranking factors for an OPAC ranking/relevance algorithm...

In Doodling Ideas for a Mobile Library App I described a variety of ways in which it might be possible to personally rank search results using criteria such as preferring works that are currently on-shelf, in libraries that I am allowed to use for reference, that I'm registered with, that I can register with, and so on.
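By way of a doodle, the criteria above could be turned into a simple additive score. This is only a sketch under my own assumptions: the `Holding` and `User` records, the weights and the library names are all invented, and I'm not suggesting any real OPAC works like this.

```python
from dataclasses import dataclass


@dataclass
class Holding:
    title: str
    library: str
    on_shelf: bool


@dataclass
class User:
    registered: set      # libraries I'm already a member of
    reference_only: set  # libraries I may use for reference
    can_register: set    # libraries I could join if I wanted to


# Hypothetical weights: being on the shelf right now counts most.
WEIGHTS = {"on_shelf": 4, "registered": 3, "reference": 2, "can_register": 1}


def personal_score(holding, user):
    """Add up the weights of every criterion this holding satisfies."""
    score = WEIGHTS["on_shelf"] if holding.on_shelf else 0
    if holding.library in user.registered:
        score += WEIGHTS["registered"]
    elif holding.library in user.reference_only:
        score += WEIGHTS["reference"]
    elif holding.library in user.can_register:
        score += WEIGHTS["can_register"]
    return score


def rank_for_user(holdings, user):
    """Highest score first; ties keep the catalogue's original order."""
    return sorted(holdings, key=lambda h: personal_score(h, user), reverse=True)


me = User(registered={"Home"}, reference_only={"Neighbour"}, can_register={"City"})
holdings = [
    Holding("Copy at City Library", "City", on_shelf=False),
    Holding("Copy at Home Library", "Home", on_shelf=True),
    Holding("Copy at Neighbour Library", "Neighbour", on_shelf=True),
]
ranked_holdings = rank_for_user(holdings, me)
print([h.library for h in ranked_holdings])
```

The same pattern would stretch to further factors (opening hours, ebook availability and so on) by adding more weighted terms.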

The results might also be ranked according to whether they appear on a reading list for a course I am associated with, or potentially whether they have been borrowed by other people on the same course as I am, maybe even from previous years, or based on recommendations from MOSAIC (see, for example, JISC MOSAIC Competition Entries - Imaginings Around the Use of Library Loans Data). Or how about ranking books according to the grades of students who have borrowed them previously? Or based on patterns of borrowing (relating my borrowing pattern to that of students who have gone before me)? Or based on "reading level", which is then correlated against my current year of study? And so on...

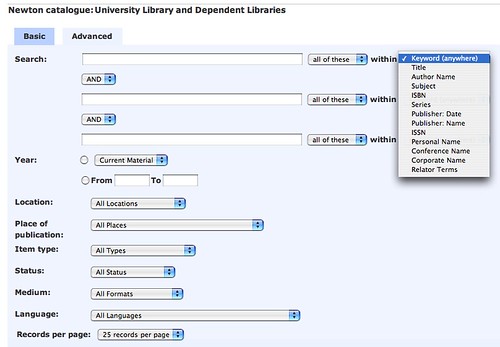

As far as the search index goes, I would probably find it useful to be able to discover items based on a keyword search of the full text, rather than just a title and maybe a few keywords.

So how close are current typical OPACs to this, assuming that some of the more obvious, highly weighted ranking factors might be exposed in advanced search screens?

Hmmm...

To use a rather blunt turn of phrase, Google made - and continues to make - a shed load of cash through its ranking and auction algorithms, algorithms that rank content (whether that is in the form of organic search results, sponsored links or AdSense adverts) that is relevant to a particular user in a highly effective way.

So I wonder - do Library folk get to tune their OPAC algorithms?

Prototyping a system need not even require the development of a ranking algorithm - a filter list can be used to report just a subset of works, e.g. based on items listed in a reading list. (For a tangentially related example, see Mashlib Pipes Tutorial: Reading List Inspired Journal Watchlists.)
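A filter list really can be that simple. Here's a minimal sketch, assuming (and it is just an assumption) that search results come back as dictionaries with an `isbn` key and that the reading list is a plain list of ISBN strings; the ISBNs and titles below are made up.

```python
def normalise_isbn(isbn):
    """Strip hyphens and spaces so differently formatted ISBNs compare equal."""
    return isbn.replace("-", "").replace(" ", "").upper()


def filter_by_reading_list(results, reading_list_isbns):
    """Keep only results on the reading list, in the catalogue's own order."""
    wanted = {normalise_isbn(i) for i in reading_list_isbns}
    return [r for r in results if normalise_isbn(r["isbn"]) in wanted]


# Made-up search results and reading list, purely for illustration.
results = [
    {"title": "General Introduction", "isbn": "978-0-00-000001-1"},
    {"title": "Set Text", "isbn": "978-0-00-000002-8"},
    {"title": "Unrelated Monograph", "isbn": "978-0-00-000003-5"},
]
reading_list = ["9780000000028", "978 0 00 000001 1"]

on_list = filter_by_reading_list(results, reading_list)
print([r["title"] for r in on_list])
```

No ranking is going on here at all: the catalogue's order is preserved and the reading list simply decides what gets through.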

PS it's also worth considering for a moment the users, here... With the advent of the web, discovery and resource availability are no longer the sole preserve of the library. The OPAC exposes a tiny fraction of all available content (admittedly curated content, which means that it's just a locally convenient collection, albeit just one of many that a typical student can now access). Surveys such as those described in Seeking Information in the Digital Age, Do Libraries Cater for Today's Undergraduate Students?, and Do Libraries Cater for Today's Researchers and Research Students? point at a present (not even a possible future) in which library users go elsewhere. The OPAC is just one place of many to look for information. In fact, if I was to be contentious, I would argue that the only significant thing an OPAC is good for is discovering the availability and location of known items within a particular library (ducks...;-).

Secondarily, it may also turn up items loosely related to a particular topic, based on the presence of search terms in metadata, or keywords in titles or subject classifications; but just bear in mind that subject classifications tend to reflect the preferences of the individual cataloguer and the controlled vocabulary they used, rather than the more general index that an information retrieval system based on text analysis would build, and that most library users don't speak in subject classification language... (Maybe that's why asking what words to use is one of the few things that library users ask librarians for help with (Seeking Information in the Digital Age)?!)

2 comments:

Happy to show you the ranking control, for what it is worth.

To my mind, the main reason we don't regularly tune it is that unlike websites where HTML tags and their content can vary vastly in use, the data elements of a MARC record remain fairly constant in usage and content. An author field is an author field, and it usually contains a name. Furthermore

So unlike Google, there is no real need to constantly invest in and rethink relevancy, as MARC data is usually static in form.

The setup of Cambridge's OPAC leans heavily away from keyword searching and focuses upon left-anchored index browsing.

(I'm not saying any of the above is a good thing, that's just how it is.)

This approach fits the known item searching model, which I personally tend to agree with. Discovery these days often happens outside the catalogue (possibly in keyword-based systems with better relevancy ranking...).

In Cambridge, it's often based upon a reference, suggestion or list.

An OPAC that used circulation information to inform relevancy would be interesting, although possibly somewhat self-fulfilling...

" the data elements of a MARC record remain fairly constant in usage and content"

Yes, but I'm suggesting that the needs of individual users differ. So a fixed rank, "ground truth" SERP may not actually be that useful compared to results pages that rank results according to availability of books, whether the library is open or whether an ebook is available etc (remember, folk want instant fulfilment now...) let alone the personal preferences and situation of the user?

"This approach fits the known item searching mode"- agreed, which is why "I would argue that the only significant thing an OPAC is good for is discovering the availability and location of known items within a particular library" ;-)
